Experiment 30

Enforce your dependency policy before AI agents or developers can commit a violation.

Your AI agent has a pyproject.toml problem

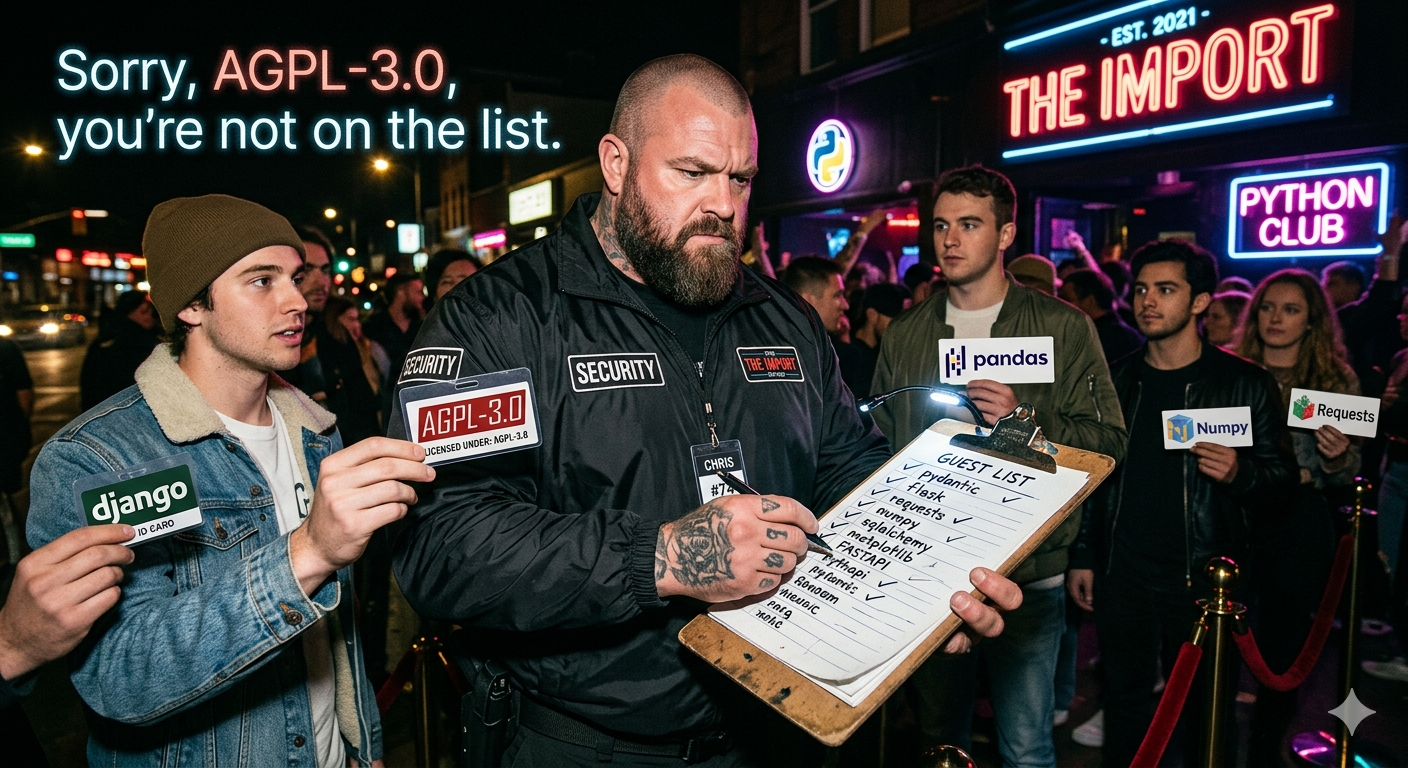

Your AI coding agent has been busy. While you were getting coffee, it cheerfully added requests-async-mega-turbo, definitely-not-malware, and cool-utils-2024 to your pyproject.toml. One has a critical CVE. One is AGPL-licensed and you ship proprietary software. One was banned by your legal team last quarter.

You won’t find out until PR review. Maybe.

This is a new kind of problem, and most of the tools you already pay for are the wrong shape for it.

Why existing tooling misses

Dependabot, Snyk, and FOSSA do excellent work, but in most setups that work starts after a PR is open. That timing made sense when “adding a dependency” was a deliberate human act. A developer added a package; a tool flagged it on review; the developer pushed back or pulled it out. Cost of being late: one revert.

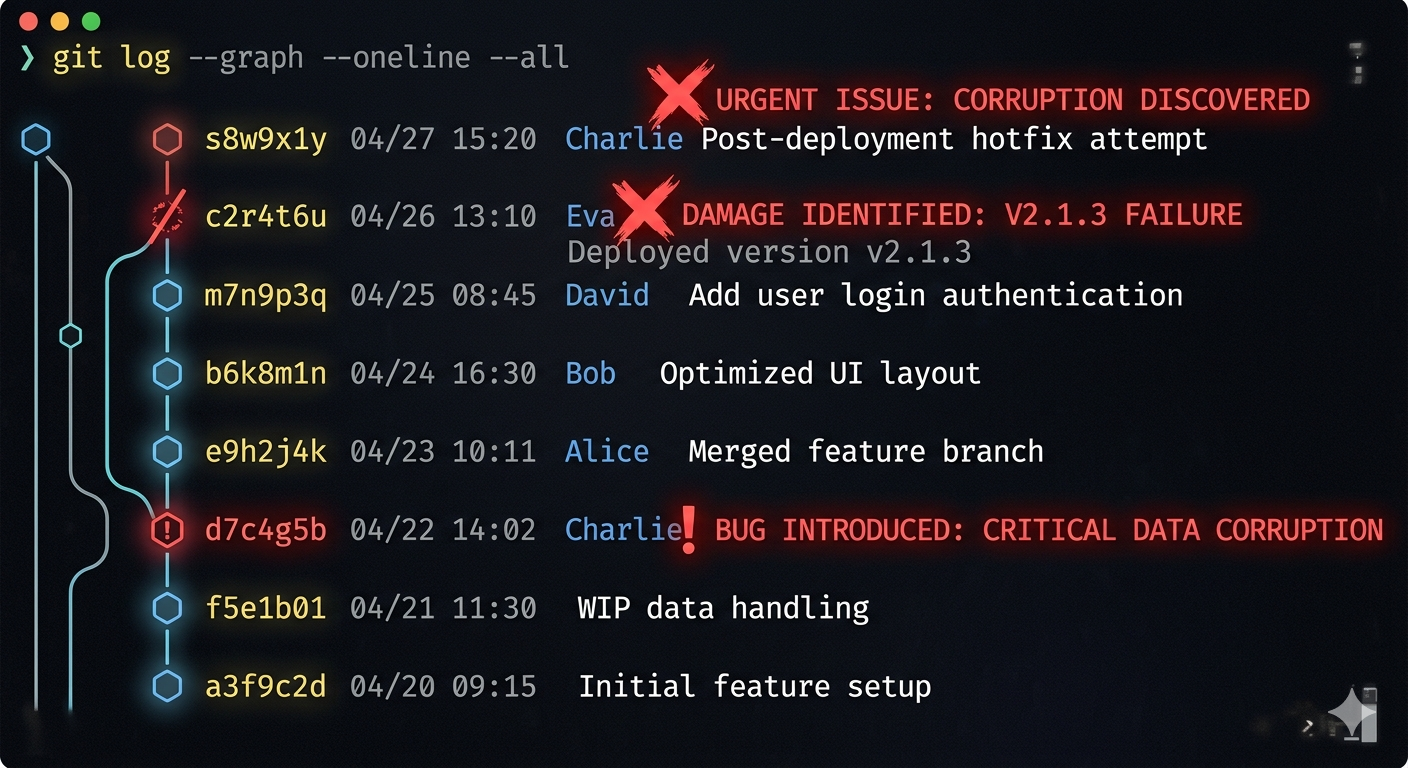

AI agents broke that loop. By the time the PR is open, the agent has already:

- picked the package,

- imported it across three files,

- written tests against its API,

- possibly refactored adjacent code to fit its idioms.

Telling the reviewer “this dep is banned” at that point means throwing out a chunk of work and asking the agent to re-plan. The cost of being late didn’t just go up; it changed in kind. The existing tools haven’t caught up because their architecture assumes a slower, more deliberate authoring loop than the one we actually have now.

Why agents need a different shape of answer

There’s a second, subtler problem: agents are bad at fuzzy answers.

A human reading “this package has license concerns, consider alternatives” knows what to do. An LLM reading the same string will sometimes paste it into a code comment and keep going. The signal a coding agent can actually act on is a closed enum — allowed, not allowed, replace with this — returned before the bytes hit disk.

That’s not a feature request. It’s a structural property of working with autonomous tools that take action on partial information. Policy delivered as prose is policy that won’t be enforced.
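To make “closed enum” concrete, here is a minimal sketch of what such a verdict could look like in Python. The names `Verdict`, `Decision`, and `next_action` are hypothetical and chosen for illustration; this is not lex-align’s actual API. The point is only that every possible answer maps to exactly one action the agent can take.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Verdict(Enum):
    """The only three answers the policy check may return (hypothetical names)."""
    ALLOWED = "allowed"
    NOT_ALLOWED = "not_allowed"
    REPLACE = "replace"


@dataclass(frozen=True)
class Decision:
    verdict: Verdict
    replacement: Optional[str] = None  # only meaningful for Verdict.REPLACE

    def __post_init__(self) -> None:
        # A REPLACE verdict must name a replacement; the other verdicts must not.
        has_replacement = self.replacement is not None
        if (self.verdict is Verdict.REPLACE) != has_replacement:
            raise ValueError("replacement must be set if and only if verdict is REPLACE")


def next_action(package: str, decision: Decision) -> str:
    """Map a decision to the single action an agent should take next."""
    if decision.verdict is Verdict.ALLOWED:
        return f"add {package}"
    if decision.verdict is Verdict.REPLACE:
        return f"add {decision.replacement} instead of {package}"
    return f"do not add {package}"
```

There is no branch for “has concerns, consider alternatives”. If the policy engine cannot decide, that has to be resolved on the policy side, not handed to the agent as prose.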

One answer

lex-align is one take on this: it checks every proposed Python dependency against your approved registry, the OSV vulnerability feed, and your license rules, and returns a clear verdict before the package lands in pyproject.toml. It’s narrow on purpose: Python only, direct dependencies, a single CVSS threshold, self-hosted. Those are choices, not gaps. The docs walk through install, hooks, and agent integration.
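As a rough illustration of that three-part check, the sketch below runs a proposed package through an approved list, a license rule, and a single CVSS threshold. Everything here is an assumption made for the example: `Policy`, `PackageFacts`, and `check` are hypothetical names, the facts are passed in by hand rather than fetched from OSV or a package index, and the order of the checks is a guess rather than a description of how lex-align works.

```python
from dataclasses import dataclass
from typing import FrozenSet, Literal, Optional, Tuple

Verdict = Literal["allowed", "not_allowed"]


@dataclass(frozen=True)
class Policy:
    approved: FrozenSet[str]         # packages your registry has approved
    banned_licenses: FrozenSet[str]  # license identifiers you cannot ship
    cvss_threshold: float            # scores at or above this are rejected


@dataclass(frozen=True)
class PackageFacts:
    name: str
    license: str
    max_cvss: Optional[float]  # highest known advisory score, None if no advisories


def check(facts: PackageFacts, policy: Policy) -> Tuple[Verdict, str]:
    """Return a deterministic verdict plus a short reason for logs."""
    if facts.name not in policy.approved:
        return "not_allowed", "not in approved registry"
    if facts.license in policy.banned_licenses:
        return "not_allowed", f"license {facts.license} is banned"
    if facts.max_cvss is not None and facts.max_cvss >= policy.cvss_threshold:
        return "not_allowed", f"CVSS {facts.max_cvss} meets or exceeds threshold"
    return "allowed", "passed all checks"


if __name__ == "__main__":
    policy = Policy(
        approved=frozenset({"requests", "httpx"}),
        banned_licenses=frozenset({"AGPL-3.0-only"}),
        cvss_threshold=7.0,
    )
    for facts in (
        PackageFacts("requests", "Apache-2.0", None),
        PackageFacts("definitely-not-malware", "MIT", None),
    ):
        print(facts.name, check(facts, policy))
```

The same inputs always produce the same verdict, which is what lets an agent call a check like this before it writes to pyproject.toml and act on the result without interpretation.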

The piece worth pulling out is the philosophy of the verdict: closed enum, deterministic, returned before action. Whoever eventually builds the equivalent for JavaScript or Go will end up with the same shape, because the problem demands it.

Who should actually care

If you have AI agents committing to a Python codebase with any real license or security policy, and you currently find out about violations at PR review or later, this hole exists in your stack whether you’ve named it or not. lex-align is a specific, working answer to it.

If you’re on a polyglot stack, this particular tool isn’t yours yet — but the problem is, and watching how it gets solved in one language is a reasonable use of your time.

The honest question to leave with: do you actually know what your agents have installed this month?

If the answer is no, the problem is real even if the tool you end up using isn’t this one.